I shipped AskUserQuestion in 2012. We called it AP EECS.

10 min read • 1886 words

Context

In Fall 2012 — my last semester at Michigan — my best friend, ex-roommate, and fellow CS student Mike Varano and I did an independent study under Dr. David Chesney. EECS 499. We built an iPad app called AP EECS.

I wrote about the motivation for it back in 2012 — the short version was that neither of us had access to any computer science in our high schools, that the AP CS exam was being redesigned to be more theoretical and less Java-syntax-heavy, and that we wanted to build something teachers could plug into an after-school program. The app was the artifact; the dream was bigger.

The app shipped on December 17, 2012. The repo went public on December 18 — one day after I submitted my final paper, one day after my last day as an undergraduate. It has not been touched since May 2, 2013, when Mike pushed a small README cleanup.

The repo is here. I'm leaving it public.

This post is a look at what was actually inside it.

The shape

It was an iPad app, written in Objective-C, targeting iOS 6. Storyboards weren't yet The Way Apple Wanted It; we did everything in XIBs. Auto Layout existed but was new and we didn't trust it. Swift didn't exist. There is exactly one Storyboard file in the repo and it's effectively empty — every screen is a XIB.

The high-level architecture is a four-controller iPad UI:

- APEECSMasterViewController: the home screen. Shows the current course title and gives you buttons for Topics, Study, and About.

- SelectATopic: picks one of the topics defined for the current course.

- QuestionAsker: a custom subclass of UIPageViewController. The user swipes left/right between questions; this controller manages the swipe and instantiates the right question subclass.

- StudyView: the embedded study guide for browsing material instead of being quizzed.

Plus an APEECSAboutViewController with a text field where the user enters the URL of the course they want to load.

The XML-driven content model

Here's the part that holds up. AP EECS isn't really an AP EECS app at all — it's a generic question-asking shell. All content lives in an XML file at a URL the user pastes in.

The app fetches <base_url>/input.xml. That XML defines the entire course:

<topiclist>

<topic n="Basics">

<question type="xchoice" q="What is the benefit of representing data using binary?">

<response>Can represent data using bits</response>

<response>Everything has two states: true and false</response>

<response>Depiction using 0s and 1s</response>

<response correct="true">All of the above</response>

</question>

</topic>

<topic n="Binary">

<question type="bin" toDec="true">128</question>

<question type="bin" toDec="false">7</question>

</topic>

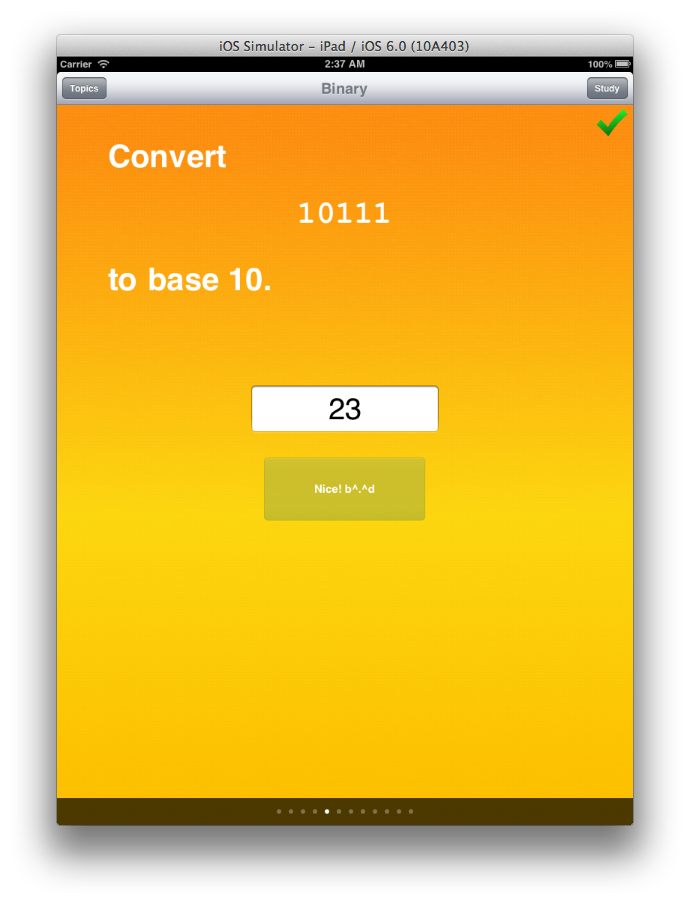

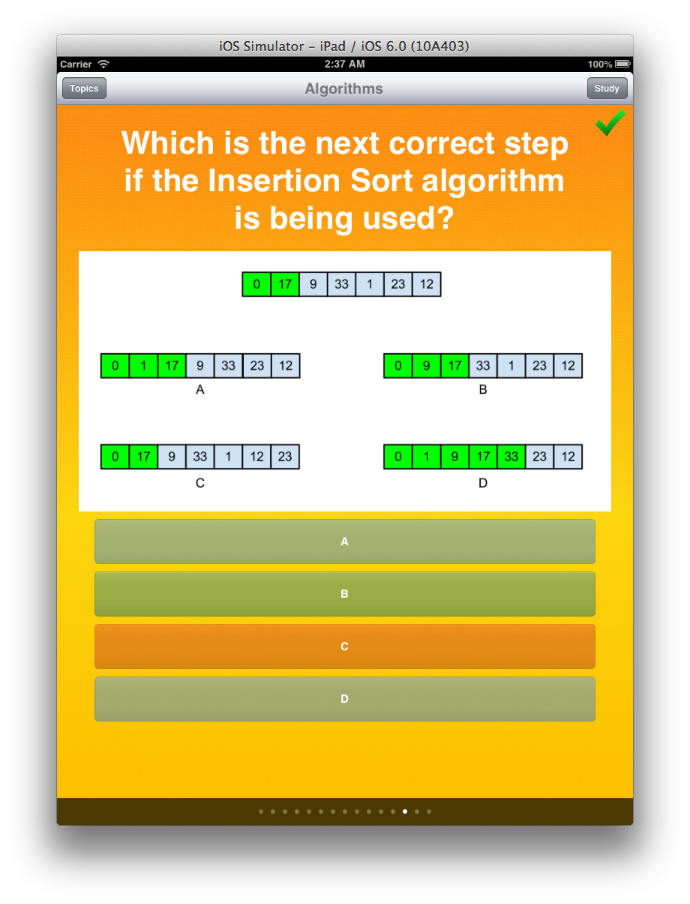

</topiclist>There are two question types in the shipped binary: xchoice (multiple choice) and bin (binary-to-decimal or decimal-to-binary conversion drills, since the AP EECS exam tested that). But the architecture is open: drop a new XIB and a new QuestionBaseClass subclass into the project and you've added a question type.
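The whole content model fits in a few dozen lines. Here's a minimal Python sketch of a reader for the schema above, assuming only what the sample shows: a topiclist of topic elements (name in the n attribute) holding question elements with type, q, toDec, and correct attributes. The Question and Topic classes and parse_course are hypothetical names for illustration; the real app did this in Objective-C, and this is not a port of it.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET
from dataclasses import dataclass, field


@dataclass
class Question:
    kind: str                          # "xchoice" or "bin" in the shipped schema
    prompt: str                        # q="..." for xchoice; element text for bin
    responses: list[str] = field(default_factory=list)
    correct_index: int | None = None   # xchoice: position of the correct="true" response
    to_dec: bool | None = None         # bin: value of the toDec attribute


@dataclass
class Topic:
    name: str
    questions: list[Question]


def parse_course(xml_text: str) -> list[Topic]:
    """Turn an input.xml document into topics, skipping unknown question types."""
    root = ET.fromstring(xml_text)  # the <topiclist> element
    topics: list[Topic] = []
    for topic_el in root.findall("topic"):
        questions: list[Question] = []
        for q in topic_el.findall("question"):
            kind = q.get("type", "")
            if kind == "xchoice":
                responses = q.findall("response")
                correct = next(
                    (i for i, r in enumerate(responses) if r.get("correct") == "true"),
                    None,
                )
                questions.append(Question(
                    kind, q.get("q", ""),
                    [(r.text or "").strip() for r in responses], correct,
                ))
            elif kind == "bin":
                questions.append(Question(
                    kind, (q.text or "").strip(), to_dec=q.get("toDec") == "true",
                ))
            # Any other type is ignored here; in the app it would need its own
            # subclass (and XIB) before it could be shown.
        topics.append(Topic(topic_el.get("n", ""), questions))
    return topics
```

Unknown types are skipped rather than rejected, which is one way to keep an older client usable when a course uses a question type it doesn't know about.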

The repo ships two sample courses: the actual AP EECS content built with Dr. Jeff Ringenberg's EECS 101 slides, and a "sample" course about Michigan football — there's a question that asks how many Heisman winners Michigan has had, with the right answer being 3. (The football course was the unit test: if a course about Heisman trophies and "Hail to the Victors" rendered the same way the EECS content did, the architecture was actually domain-agnostic. Also: Go Blue.)

Persistence

User progress — which questions you've answered correctly per topic per course — was stored as a three-level dictionary in a plist:

courseUrl → topicIndex → questionIndex → wasCorrect

Indexed by URL because users could switch between courses, and we wanted progress to persist per-course. Every write read the whole plist from disk, mutated it in memory, and wrote the whole thing back. For a study app with a few hundred questions, this was fine.
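Here's a sketch of that read-modify-write cycle using Python's plistlib, assuming the three levels are nested dictionaries and that the indices are stored as string keys (plist dictionary keys have to be strings). record_answer and was_correct are hypothetical helpers, not functions from the repo.

```python
from __future__ import annotations

import os
import plistlib


def _load(path: str) -> dict:
    # Whole-file read; a missing file just means no progress yet.
    if not os.path.exists(path):
        return {}
    with open(path, "rb") as f:
        return plistlib.load(f)


def record_answer(path: str, course_url: str, topic_index: int,
                  question_index: int, correct: bool) -> None:
    progress = _load(path)
    # courseUrl -> topicIndex -> questionIndex -> wasCorrect.
    # Plist dictionary keys must be strings, so the indices are stringified.
    topics = progress.setdefault(course_url, {})
    questions = topics.setdefault(str(topic_index), {})
    questions[str(question_index)] = correct
    # Write the whole structure back, mirroring the approach described above.
    with open(path, "wb") as f:
        plistlib.dump(progress, f)


def was_correct(path: str, course_url: str, topic_index: int,
                question_index: int) -> bool:
    progress = _load(path)
    return bool(progress.get(course_url, {})
                        .get(str(topic_index), {})
                        .get(str(question_index), False))
```

Each write costs time proportional to the size of the whole file, but with a few hundred booleans that cost is invisible, which is why the simple approach held up.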

QuestionBaseClass and the inheritance hierarchy

The question types use classical OO inheritance, which feels archaic in 2026 and felt totally normal in 2012 Obj-C. QuestionBaseClass : UIViewController. Subclasses (XChoiceQuestion, BinaryQuestion) override a method to fetch their saved state.
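To make the shape of the hierarchy concrete, here's a Python sketch. In the repo the overridden method fetched saved state; here, as an assumption for illustration, the override is answer checking, and it also assumes toDec="true" means "show the value in binary, ask for decimal". QuestionBase, the QUESTION_TYPES registry, and every method name are hypothetical stand-ins, not the repo's Objective-C.

```python
from __future__ import annotations


class QuestionBase:
    """Shared behaviour; each subclass decides what a correct answer is."""

    def __init__(self, index: int) -> None:
        self.index = index

    def is_correct(self, answer: str) -> bool:
        raise NotImplementedError


class XChoiceQuestion(QuestionBase):
    def __init__(self, index: int, responses: list[str], correct_index: int) -> None:
        super().__init__(index)
        self.responses = responses
        self.correct_index = correct_index

    def is_correct(self, answer: str) -> bool:
        # answer is the label of the response the user tapped
        return answer == self.responses[self.correct_index]


class BinaryQuestion(QuestionBase):
    def __init__(self, index: int, value: int, to_dec: bool) -> None:
        super().__init__(index)
        self.value = value
        self.to_dec = to_dec

    def prompt(self) -> str:
        # Assumed semantics: to_dec shows binary and expects decimal, and vice versa.
        return format(self.value, "b") if self.to_dec else str(self.value)

    def is_correct(self, answer: str) -> bool:
        try:
            given = int(answer.strip(), 10 if self.to_dec else 2)
        except ValueError:
            return False  # e.g. "2" typed into a binary answer
        return given == self.value


# Adding a question type = one new subclass plus one entry here.
QUESTION_TYPES = {"xchoice": XChoiceQuestion, "bin": BinaryQuestion}
```

The registry is the Python analogue of dropping a new XIB and QuestionBaseClass subclass into the project: the dispatcher looks up the type string and never needs to change.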

When the user gets the answer right, the subclass tells its delegate (the QuestionAsker) and the page view controller advances. The header has a hand-written explanation of how the message-passing works because Obj-C's protocol/delegate pattern was a nightmare for someone who had spent the last four years writing C++:

When the inheriting class knows the user has their question right, it should call:

```objectivec
[(QuestionAsker*) self.delegate questionIsRight:[[[NSNumber alloc] initWithUnsignedInt: self.index] stringValue]];
```

Which tells the QuestionAsker to mark the question as correct...

That ceremonial cast through (QuestionAsker*), there so we could call a typed method on self.delegate, is what sending a specific message to a loosely typed delegate looked like in our Obj-C. In modern Swift you'd declare a protocol QuestionAskerDelegate and type the delegate property as that protocol, with no cast at the call site; we were a year and a half too early for Swift and a decade too early to be embarrassed about it.
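For comparison, here's the typed-delegate version of that message, sketched in Python with typing.Protocol standing in for a Swift protocol. QuestionAskerDelegate, question_is_right, and the advance-on-correct logic are illustrative assumptions; the index is passed as an int rather than a stringified NSNumber.

```python
from __future__ import annotations

from typing import Protocol


class QuestionAskerDelegate(Protocol):
    def question_is_right(self, index: int) -> None: ...


class Question:
    def __init__(self, index: int, delegate: QuestionAskerDelegate) -> None:
        self.index = index
        self.delegate = delegate  # typed as the protocol, so no cast is needed

    def user_got_it_right(self) -> None:
        self.delegate.question_is_right(self.index)


class QuestionAsker:
    """Stands in for the page view controller: records answers and advances."""

    def __init__(self, count: int) -> None:
        self.count = count
        self.current = 0
        self.correct: set[int] = set()

    def question_is_right(self, index: int) -> None:
        self.correct.add(index)
        # Advance only if the current page was answered and it isn't the last one.
        if index == self.current and self.current < self.count - 1:
            self.current += 1
```

Python only checks the Protocol statically (with a type checker such as mypy), not at runtime, but the design point carries over: the delegate's declared type says which messages it accepts, so the call site stays cast-free.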

What I'd do differently in 2026

The architecture, surprisingly, holds up as a sketch. The bones are right:

- Content comes from a remote source so courses can be added without app updates.

- Question types extend a base class so new types can be added cheaply.

- Per-course progress is persisted client-side.

What I'd change:

- JSON instead of XML. XML was already losing in 2012; we picked it because it was familiar from web stuff. iOS still doesn't have a great XML parser; it does have Codable and a great JSONDecoder.

- A course-builder companion app, but keep the URL field. The decentralized URL-driven content model is the part of this app I'm proudest of in retrospect. No accounts, no backend, no central authority that can deprecate or shut down a course. The course outlives our infrastructure. What we lacked wasn't a backend; it was an authoring tool. A small Mac or iPad companion app that let a teacher build a course visually and export an input.json (or input.xml) is the missing piece. Pair it with the existing "paste a URL" deployment surface and you have a complete content lifecycle: a teacher builds the course in app A, hosts it anywhere they want (Dropbox, GitHub Pages, school server), and points app B at it.

- SwiftUI. The XIBs are not aging well. SwiftUI's declarative model would compress the whole XIB-based UI into about 200 lines of Swift. The question-type extensibility would become a protocol QuestionView with concrete View types.

- Course caching. This is in the README's Future Features list: load over the wire on first open, then run offline. Never got to it.

- Background image loading. Also in Future Features. We were freezing the UI thread loading remote images for image-bearing multiple-choice questions, then noticing the freeze was bad, then writing it down for someone else to fix. That someone else turned out to be no one.
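The course-caching item is small in code. Here's a minimal Python sketch, assuming a network-first policy: try <base_url>/input.xml, save a copy on success, and fall back to the saved copy when the fetch raises OSError (urllib's URLError is a subclass of OSError). load_course and its injected fetch parameter are hypothetical; passing the fetcher in keeps the function testable without a network.

```python
from __future__ import annotations

import hashlib
import os
from typing import Callable


def load_course(base_url: str, cache_dir: str,
                fetch: Callable[[str], bytes]) -> bytes:
    """Return the course XML, preferring the network and falling back to the cache."""
    url = base_url.rstrip("/") + "/input.xml"
    # One cache file per course URL, named by a hash so any URL is a safe filename.
    name = hashlib.sha256(url.encode("utf-8")).hexdigest() + ".xml"
    cache_path = os.path.join(cache_dir, name)
    try:
        data = fetch(url)
    except OSError:
        # Offline (or the host is down): serve the last good copy if there is one.
        if os.path.exists(cache_path):
            with open(cache_path, "rb") as f:
                return f.read()
        raise  # never loaded this course before, so there is nothing to show
    os.makedirs(cache_dir, exist_ok=True)
    with open(cache_path, "wb") as f:
        f.write(data)
    return data
```

In real use, fetch could be lambda url: urllib.request.urlopen(url, timeout=10).read(). A cache-first policy (fetch only when nothing is cached) reads closer to the README's wording but never picks up course edits; network-first trades a little latency for freshness.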

The thing that's interesting in 2026

Re-reading our XML schema in May 2026, after a year of working with LLM agents every day, I noticed something that wasn't visible to past me: this is a tool call.

apeecs's question schema, in XML:

```xml
<question type="xchoice" q="What is the benefit of representing data using binary?">
  <response>Can represent data using bits</response>
  <response>Everything has two states: true and false</response>
  <response>Depiction using 0s and 1s</response>
  <response correct="true">All of the above</response>
</question>
```

The same shape, in the JSON schema for AskUserQuestion, the tool Claude reaches for in my Claude Code sessions whenever it needs me to pick from a structured list of options:

```json
{
  "question": "What is the benefit of representing data using binary?",
  "header": "Binary",
  "multiSelect": false,
  "options": [
    { "label": "Can represent data using bits", "description": "..." },
    { "label": "Everything has two states", "description": "..." },
    { "label": "Depiction using 0s and 1s", "description": "..." },
    { "label": "All of the above", "description": "..." }
  ]
}
```

And the same shape with the syntax stripped, the way a teacher would scribble it on a whiteboard:

```text
TYPE: multiple choice (one answer)
PROMPT: What is the benefit of representing data using binary?
A: Can represent data using bits
B: Everything has two states: true and false
C: Depiction using 0s and 1s
D: All of the above ← correct
```

Same shape. Typed prompt. N labeled choices. A field that distinguishes the question type (type="xchoice" / multiSelect: false / "one answer"). A runtime that loads the schema and renders an interaction. The differences are cosmetic: Mike and I picked XML because it was familiar from web stuff in 2012; Anthropic picked JSON because that's what AI agent infrastructure speaks now; a teacher writes it on a whiteboard because that's the lingua franca of "I have a question and I want one of these answers." The deeper structure is unchanged.
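To make the "same shape" claim concrete, here's a small Python converter from an apeecs xchoice element to a dict with the fields shown in the JSON example above (question, header, multiSelect, and options with label and description). It mirrors only that example, not necessarily the complete AskUserQuestion input schema, and to_ask_user_question is a hypothetical name. apeecs had no per-response description, so it is left empty.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET


def to_ask_user_question(question_xml: str, header: str) -> dict:
    q = ET.fromstring(question_xml)
    if q.get("type") != "xchoice":
        raise ValueError("only xchoice questions map onto a pick-one prompt")
    return {
        "question": q.get("q", ""),
        "header": header,
        # xchoice meant exactly one answer, i.e. single-select
        "multiSelect": False,
        # correct="true" has no counterpart here, so it is dropped
        "options": [
            {"label": (r.text or "").strip(), "description": ""}
            for r in q.findall("response")
        ],
    }
```

The one piece that doesn't survive the trip is the correct="true" marker: a quiz knows its answer in advance, while an agent asking a human a question is waiting on the human's pick.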

"Pick one of these options" is now the foundational interface between humans and AI agents. Claude Code, Cursor, and every other coding agent shipping today calls AskUserQuestion (or its equivalent) constantly — every time the agent hits a decision it doesn't want to make alone, this is the primitive it reaches for. The mode used to be peripheral: a survey form, an iClicker poll, a Typeform sidebar. With LLM tools, it became central. It's how you stay in the loop with a model that's making dozens of small decisions a minute on your behalf.

apeecs accidentally landed on the same primitive in 2012, in a much smaller scope (same prompt, same options, same one-answer-or-many flag), because when you boil "ask the human to pick one of N choices" down to its bones, this is what falls out. Mike and I weren't building infrastructure for the next dominant human-AI interface. We were building a study app for one specific AP exam. Turns out the schema we sketched in service of that narrow goal is the same shape that, fourteen years later, runs the inner loop of every conversation between a human and an AI agent.

If we'd open-sourced the schema in 2012, divorced from the iPad app, it would still be useful in 2026. The iPad app went to bed and never woke up. The data shape is timeless.

What never happened

The 2012 post ends with: *"I am in talks with a professor at the university about turning the source code over to Michigan for continued development."*

Nothing happened. Mike and I graduated, started jobs, and the conversation died on its own. As far as I know, no version of this app was ever used in a Detroit Public Schools after-school program. We wrote a final paper, got our credit, and the artifact sat where it sat.

That's the honest part of any senior project: the dream is bigger than the execution. The execution is six XIBs, two Question subclasses, and a fully working iPad app that does, in fact, ask you questions about whether the right answer is "All of the above."

It was a great project. I am leaving the repo public.

Someone should vibe code an AI interview framework based on the extensible principles of apeecs.